MinD-Video: A Video Generative Tool That Uses Augmented table Diffusion

You could soon see your brain scans in the form of Instagram Reels, TikTok or YouTube Shorts…

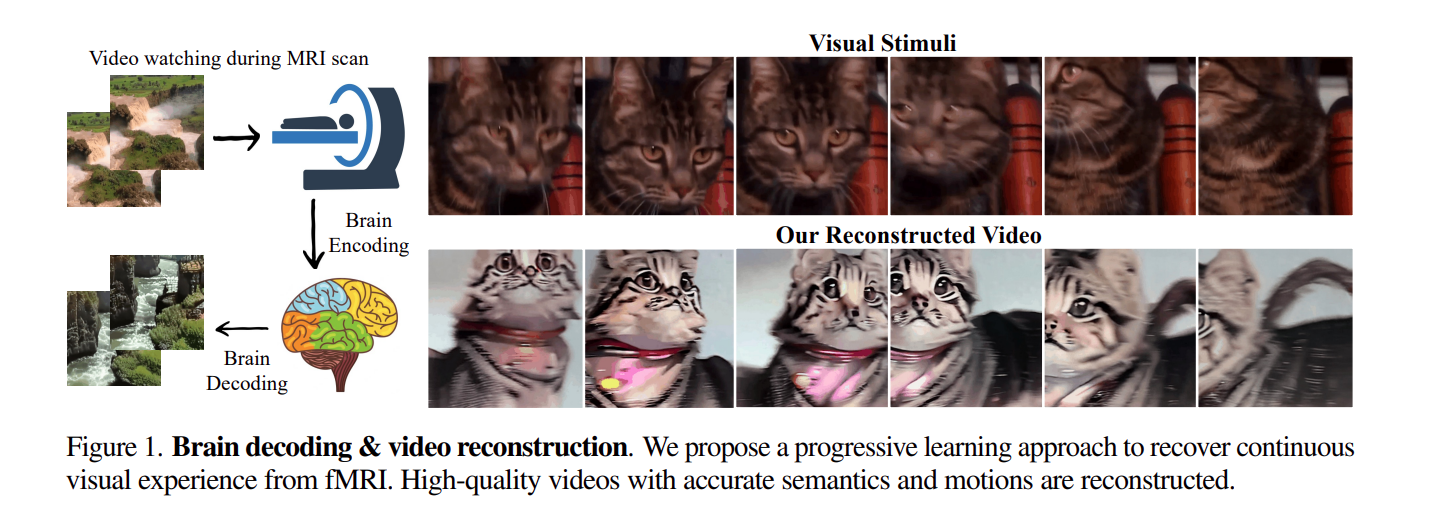

Planning a dream holiday and your mind is fixed on the Swiss Alps already? Well, soon, AI could talk to your mind! Three AI researchers have proposed a new high-quality video reconstruction model called MinD-Video based on augmented Stable Diffusion generative AI. MinD-Video is a state-of-the-art AI model that can accurately reconstruct videos with near-perfect matching with semantics, motions and scene dynamics.

MinD-Video can be fine-tuned extensively using non-invasive brain recordings from continuous functional Magnetic Resonance Imaging (fMRI) data. Artificial Intelligence can soon recreate your thoughts and emotions using contrastive learning data of your brain scans. It would be a great way to extend the current scope of sentiment analysis and customer experience management using Generative AI techniques for videos, music, images and text. The model could be used to solve many questions that exist in the study of cognitive neuroscience. By acquiring non-invasive fMRI data, the AI researchers have managed to demonstrate that co-training with augmented Generative AI tools such as Stable Diffusion can capture, construct and reconstruct even the faintest of human perception, and project it as a high-quality video format.

Top News; Gartner Data & Analytics Summit 2023: A Brief Day-by-Day Overview

How is MinD-Video different?

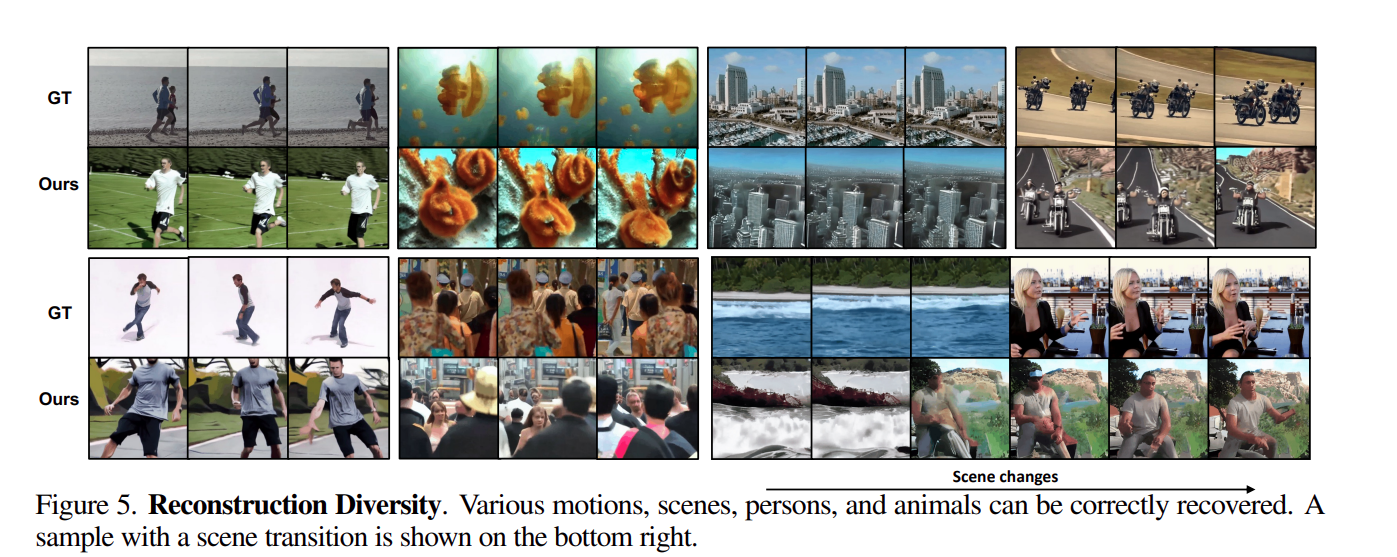

MinD-Video is trained using dynamic data, instead of static images. It can reconstruct video patterns using a continuous stream of data with advanced fMRI-annotation pairs for detection of context, scenes, motions and objects. The new model uses a two-module pipeline:

1) fMRI encoder for trainer

2) Augmented stable diffusion model for co-training and fine-tuning

These two modules simplify the fMRI’s temporal resolution, which in turn, shortens the gap between the image and the video brain decoding. The augmenting nature of this model progressively learns from brain signals through multiple stages, before generating a scene-dynamic video with near-frame attention.

It must be noted that the fMRI encoder is a pre-trained model that learns the general attributes of the visual cortex. By using Stable Diffusion for co-training, fMRI datasets are further combined with Masked Brain Modeling (MBM) and Contrastive Language-Image Pre-Training (CLIP). This space is enriched with semantic information to improve understandability of video frames by the generative model during conditioning.

An interesting point with MinD-Video is its untapped potential –the model uses less than 10% of the BOLD Signals or Voxels for video reconstructions. For a very small data set, it managed to achieve 85% accuracy in semantic classification tasks. More than 90% of the brain data is still waiting to get pulled into the fMRI embedding. We can soon have LLMs built on MinD-Video like models which could change the way market research companies analyze sentiments of users watching visual content.

Comments are closed.