LOGIQ.AI Announces LogFlow Observability Data Pipeline as a Service

Being SOC2-compliant, LogFlow keeps data security and integrity front and center. LogFlow’s InstaStore and built-in insurance solve data risks associated with machine data pipelines.

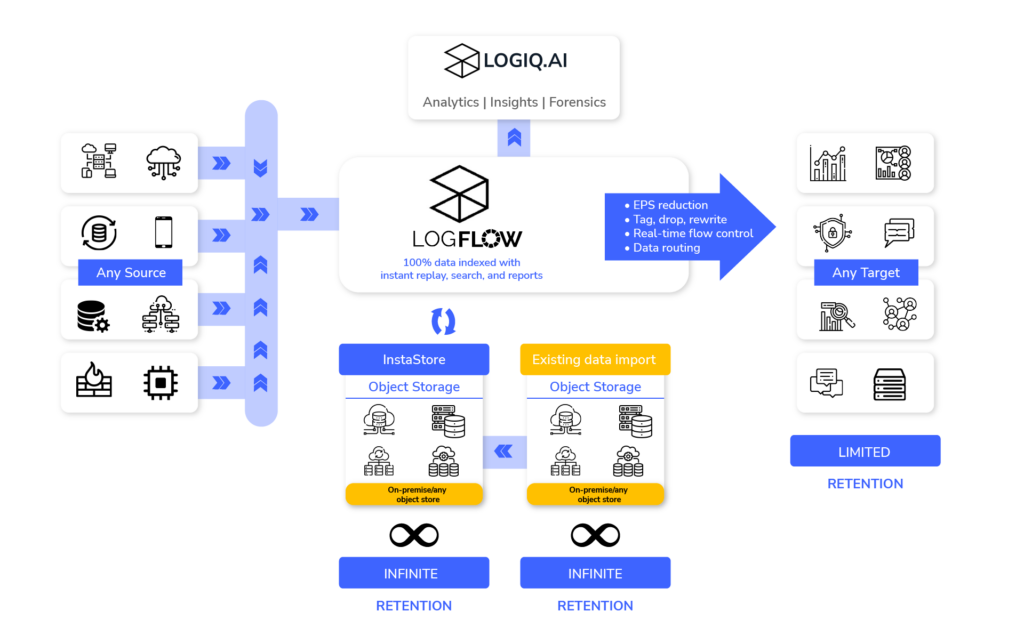

Global data creation and replication are growing at a CAGR of 23% (IDC), and 70% of organizations will shift focus to provide more context for data analytics (Gartner). New tooling is needed to optimize data volume and improve data quality. LogFlow solves these data challenges at both the core and the edge.

Greg O’Reilly, Observability Consultant at Visibility Platforms, said, “LogFlow enables our customers to take a whole new approach to observability data; one that helps regain control and unblock vendor or cost limitation. We’re opening up discussions between ITOps and Security teams for the first time with a unified solution that keeps data secure, compliant, manageable, and readily available to those who need it on the front lines.”

The Block and Drop problem

Contemporary data pipelines suffer from ingest-egress mismatches. “Enterprises have unfortunately been sold ‘block’ and ‘drop’ as intelligent features to counter back pressure and upstream unavailability in data pipelines,” said Ranjan Parthasarathy, Co-founder and CEO of LOGIQ.AI. “Block and drop is data loss in disguise. Imagine losing a vital signature in your log stream that points to impending ransomware starting to spread. Don’t introduce new business risks by buying into block and drop.”

InstaStore

LogFlow eliminates block and drop by storing 100% of streaming data in InstaStore, a storage innovation that enables object storage as primary storage. In InstaStore, data is fully indexed and searchable in real-time. LogFlow also stores its indexes in InstaStore, giving a genuinely scalable platform with cleanly decoupled storage and compute. LogFlow ingests data even when upstream targets are down. Due to its indexing capabilities, it provides fine-grained data replays.
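
The store-first idea described above can be illustrated with a minimal sketch. This is not LogFlow's implementation or API: the `StoreFirstPipeline` class, the `Event` type, and the in-memory list standing in for object storage are all hypothetical, chosen only to show how persisting every event before forwarding avoids both blocking and dropping, and how stored data can be replayed by time range.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Event:
    ts: int       # event timestamp, in seconds
    message: str


@dataclass
class StoreFirstPipeline:
    """Hypothetical sketch: persist every event, forward when upstream allows."""
    forward: Callable[[Event], None]                   # upstream target; may raise ConnectionError
    store: List[Event] = field(default_factory=list)   # stand-in for object storage
    delivered: int = 0                                 # number of stored events already forwarded

    def ingest(self, event: Event) -> None:
        # Persist first, so an upstream outage never blocks or drops the event.
        self.store.append(event)
        self.flush()

    def flush(self) -> None:
        # Forward pending events in order; stop at the first upstream failure.
        while self.delivered < len(self.store):
            try:
                self.forward(self.store[self.delivered])
            except ConnectionError:
                return
            self.delivered += 1

    def replay(self, start: int, end: int) -> List[Event]:
        # Replay a slice of stored data: events with start <= ts < end.
        return [e for e in self.store if start <= e.ts < end]
```

In this sketch, ingestion keeps succeeding while the upstream target is down, and calling `flush()` after it recovers delivers the backlog in order. A real system would need durable storage and an index rather than a list scan.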

“InstaStore introduces a new paradigm for data agility that eliminates data loss and the need for storage tiering and data rehydration. Organizations can now unlock productivity, cost reduction, and compliance like never before,” said Jay Swamidass, Head of Sales – APAC and EMEA at LOGIQ.AI.

Accelerating machine intelligence

LogFlow’s native support for open standards makes it easy to collect machine data from any source. Similar to network flows, LogFlow manages data with its flow-level routing table.

“LogFlow filters unwanted data and detects security events in-flight. Users can route streams, control EPS, and run fine-grained data replays,” said Tito George, Co-founder of LOGIQ.AI. “InstaStore’s indexing and columnar data layouts enable faster querying, unlike archive formats like gzip.”

Data routing isn’t the real problem

Open-source tools like Fluent Bit and Logstash can already route data between various sources and target systems and allow routing raw archives to object stores. The complex problems to solve are:

1) Controlling data volume and sprawl;

2) Preventing data loss;

3) Ensuring data reusability with fine-grained control; and

4) Maintaining business continuity during upstream failures.

Theodore Caroll, Head of Sales – Americas at LOGIQ.AI said, “There’s no technical reason to accept anything less than 100% data availability. Your data is your only true fortress in responding to threats and adverse business events. Businesses need a system like LogFlow that ensures full data replay is continuously and infinitely available.”

LogFlow’s log management and SIEM capabilities provide built-in insurance against upstream failures. Businesses can run it in parallel with their existing systems. If upstream systems become unavailable, LogFlow can continue to provide crucial forensics.

Built for the enterprise

LogFlow’s built-in “Rule Packs” have over 2,000 rules that filter, tag, extract, and rewrite data for popular customer environments and workloads. LogFlow’s SIEM Rule Packs also allow security event detection and tagging.
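
As a rough illustration of what filter, tag, extract, and rewrite rules do to a log line, consider the sketch below. It does not reflect the actual Rule Pack format, which the announcement does not describe. The regular expressions, the tag name, and the `apply_rules` function are all hypothetical.

```python
import re
from typing import Optional

# Hypothetical rules; patterns and tag names are illustrative only.
DROP = re.compile(r"\bDEBUG\b")                                             # filter
SSH_FAIL = re.compile(r"Failed password for (?P<user>\S+) from (?P<ip>\S+)")  # tag + extract
SECRET = re.compile(r"password=\S+")                                        # rewrite


def apply_rules(line: str) -> Optional[dict]:
    # Filter: drop lines that match the unwanted-data rule.
    if DROP.search(line):
        return None
    # Rewrite: mask secrets before the line leaves the pipeline.
    record = {"message": SECRET.sub("password=***", line), "tags": []}
    # Tag and extract: mark a security event and pull out structured fields.
    match = SSH_FAIL.search(line)
    if match:
        record["tags"].append("security:auth-failure")
        record.update(match.groupdict())
    return record
```

Here a failed SSH login line would come out tagged, with `user` and `ip` fields added, while debug lines would be dropped in-flight.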

LOGIQ.AI’s LogFlow brings complete control over observability data pipelines and delivers high-value, high-quality data to teams that need it in real-time, all the time. For the first time, organizations can fully control data collection, consolidation, retention, manipulation, and upstream data flow management.
