NGC Containers Now Available for More Users, More Apps, More Platforms

At SC18, new multi-node containers, compatibility with Singularity, and the new NGC-Ready program make data science, AI and HPC more widely available than ever

Call it a virtuous circle. GPUs are accelerating a growing number of data science and HPC workloads. This has enabled a wide range of scientific breakthroughs, including five of this year's six Gordon Bell Prize finalists. These advances boost mindshare: GPUs are featured prominently in sessions, demos and new product offerings throughout SC18, taking place this week in Dallas.

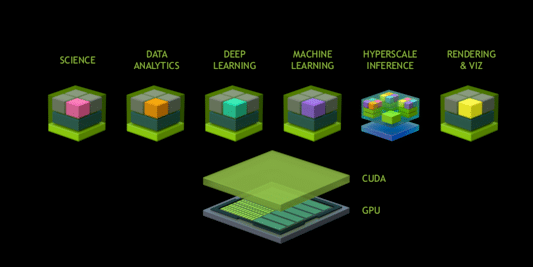

And we're completing the loop by making it easier to deploy software from our NGC container registry. Its pre-integrated and optimized containers bring the latest enhancements and performance improvements for industry-standard software to NVIDIA GPUs. As the registry grows (the number of containers has more than doubled in the last year), users have even more ways to take advantage of GPU computing.

More Applications, New Multi-Node Containers and Singularity

The NGC container registry now offers a total of 41 frameworks and applications (up from 18 last year) for deep learning, HPC and HPC visualization. Recent additions include CHROMA, MATLAB, MILC, ParaView, RAPIDS and VMD. We've also expanded the containers' capabilities and made them easier to deploy.

At SC18, we announced new multi-node HPC and visualization containers, which allow supercomputing users to run workloads on large-scale clusters.

Large deployments often use a technology called Message Passing Interface (MPI) to execute jobs across multiple servers. But building an application container that leverages MPI is challenging because so many variables define an HPC system: the scheduler, the networking stack, the MPI implementation and the versions of various drivers.

The NGC container registry simplifies this with an initial rollout of five containers supporting multi-node deployment. This makes it significantly easier to run massive computational workloads on multiple nodes with multiple GPUs per node.

And to make deployment even easier, NGC containers can now be used natively in Singularity, a container technology that is widely adopted at supercomputing sites.

New NGC-Ready Program

To expand the places where people can run HPC applications, we’ve announced the new NGC-Ready program. This lets users of powerful systems with NVIDIA GPUs deploy with confidence. Initial NGC-Ready systems from server companies include:

- ATOS BullSequana X1125

- Cisco UCS C480ML

- Cray CS Storm NX

- Dell EMC PowerEdge C4140

- HPE Apollo 6500

- Supermicro SYS-4029GP-TVRT

NGC-Ready workstations equipped with NVIDIA Quadro GPUs provide a platform with the performance and flexibility that researchers need to rapidly build, train and evolve deep learning projects. NGC-Ready systems from workstation companies include:

- HP Z8

- Lenovo ThinkStation P920

The combination of NGC containers and NGC-Ready systems from top vendors provides users a replicable, containerized way to roll out HPC applications from development to production.

Containers from the NGC container registry work across a wide variety of additional platforms, including Amazon EC2, Google Cloud Platform, Microsoft Azure, Oracle Cloud Infrastructure, NVIDIA DGX systems, and select NVIDIA TITAN and Quadro GPUs.

NGC Containers Deployed by Premier Supercomputing Centers

NGC container registry users represent a variety of industries and disciplines, from large corporations to individual researchers. Among these are two of the top education and research facilities in the country: Clemson University and the University of Arizona.

Research facilitators for Clemson's Palmetto cluster continually received requests to support multiple versions of the same applications. Installing, upgrading and maintaining all of these versions was time-consuming and resource-intensive, bogging down the support staff and hampering user productivity.

The Clemson team successfully tested HPC and deep learning containers such as GROMACS and TensorFlow from the NGC container registry on their Palmetto system. Now they recommend that users leverage NGC containers for their projects. The containers also run in Clemson's Singularity deployment, which makes them easier to support across their systems. With NGC containers, Palmetto users can now run their preferred application versions without disrupting other researchers or relying on the system admins for deployment.

At the University of Arizona, system admins for the Ocelote cluster were inundated with update requests whenever a new version of the TensorFlow deep learning framework came out. Because installing TensorFlow on HPC systems is complex and can take as long as a couple of days, this became a resource issue for their modest-sized team and often left users unhappy.

“Our cluster environment by necessity does not get updated fast enough to keep up with the requirements of the deep learning workflows,” says Chris Reidy, principal HPC systems administrator at the University of Arizona. “We made a significant investment in NVIDIA GPUs, and the NGC containers leverage that investment. We have significant interest in various fields ranging from traditional molecular dynamics codes like NAMD to machine learning and deep learning, and the NGC containers are built with an optimized and fully tested software stack to provide a quick start to getting research done.”

Reidy tested various HPC, HPC visualization and deep learning containers from NGC in Singularity on the Ocelote cluster. Following the instructions in the NGC documentation, he easily got the NGC containers up and running. They're now the preferred way of running these applications.